Sleep Accuracy: How WHOOP Measures and Scores Sleep

When you're making decisions about your training, recovery, and sleep, the quality of your data matters. WHOOP is built on a foundation of precision—collecting heart rate data points every second, 24/7, to deliver insights you can trust. But how accurate is WHOOP, really? In this guide, we'll walk through the technology behind our sensors, the validation studies that confirm our accuracy, and the factors that can influence data quality. You'll see how precise measurement translates into better coaching and smarter daily decisions.

How WHOOP measures heart rate, HRV, and sleep with industry-leading accuracy

WHOOP is a highly accurate consumer wearable for measuring heart rate (HR) and heart rate variability (HRV). Our 24/7 data collection provides a complete picture of your cardiovascular baseline, supported by third-party research. This level of precision ensures your Strain and Recovery scores are built on a foundation of trustworthy data.

- A Central Queensland University study found WHOOP to be 99.7% accurate in measuring heart rate during sleep—levels of accuracy that surpassed all other wearables in the study. The study also found WHOOP is 99% accurate in measuring heart rate variability during sleep. Lastly, WHOOP was excellent in identifying sleep when compared to the gold-standard polysomnography (PSG) and outperformed the other devices in calculating total time spent asleep.

- Another study of leading wrist-worn wearables revealed that WHOOP was the most accurate across several categories when compared to the gold-standard PSG.

How WHOOP measures sleep with gold-standard accuracy

Third-party studies support the accuracy of WHOOP sleep tracking. The same Central Queensland University (CQU) study found that WHOOP was excellent in identifying sleep when compared to PSG and outperformed the other devices in calculating total time spent asleep. Another study of leading wrist-worn wearables revealed that WHOOP was the most accurate across several categories when compared to the gold-standard PSG. This level of precision means you can trust WHOOP to provide insights that help you sleep better, whether by adjusting your bedtime, improving your sleep efficiency, or making other changes.

How accurate data powers your Sleep Performance

The WHOOP Sleep Performance Score provides a clear and accurate picture of what it truly means to get a good night's sleep. Your Sleep Performance is calculated using key factors that offer a comprehensive view of your rest. Here's what's included:

- Sleep Sufficiency (Hours vs. Needed): The sleep you actually got the night before, expressed as a percentage of the sleep you needed.

- Sleep Consistency: How closely the timing of last night's sleep matches the timing of your sleep on the previous four days. It measures how consistent your bed and wake times are.

- Sleep Efficiency: The percentage of your time in bed that you actually spent asleep.

- Sleep Stress: How much time you spent in high stress throughout the night.

By integrating these components, the Sleep Performance Score provides a holistic view of both the quantity and quality of sleep for recommendations tailored to your habits, schedule, and goals. Use Sleep Planner for coaching to improve your Sleep Performance and help you establish a nightly routine by determining the best time for you to go to bed and to wake feeling refreshed. Find it on the Home screen by tapping "Tonight's Sleep".
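As a rough illustration of the two percentage-based components above, the sketch below computes Sleep Sufficiency and Sleep Efficiency directly from their definitions. The function names and sample numbers are hypothetical, and this article does not describe how WHOOP weights or combines the components into the final Sleep Performance Score, so no combined score is attempted here.

```python
def sleep_sufficiency(hours_slept: float, hours_needed: float) -> float:
    """Sleep obtained as a percentage of sleep needed (illustrative only)."""
    if hours_needed <= 0:
        raise ValueError("hours_needed must be positive")
    return 100.0 * hours_slept / hours_needed


def sleep_efficiency(hours_asleep: float, hours_in_bed: float) -> float:
    """Percentage of time in bed that was actually spent asleep."""
    if hours_in_bed <= 0:
        raise ValueError("hours_in_bed must be positive")
    return 100.0 * hours_asleep / hours_in_bed


if __name__ == "__main__":
    # Hypothetical night: 7 h asleep, 8 h needed, 7 h 45 min in bed.
    print(round(sleep_sufficiency(7.0, 8.0), 1))   # 87.5
    print(round(sleep_efficiency(7.0, 7.75), 1))   # 90.3
```

In this example, seven hours of sleep against an eight-hour need gives 87.5% sufficiency, while spending 7.75 hours in bed to get those seven hours gives roughly 90% efficiency.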

The technology behind WHOOP sleep accuracy

WHOOP was designed from the ground up to provide the most accurate data possible using advanced sensor technology. We collect hundreds of data points per second from our 3-axis accelerometer, 3-axis gyroscope, and PPG sensor.

PPG, or photoplethysmography, is a technique that involves measuring blood flow by assessing subtle changes in blood volume in capillaries close to your skin.

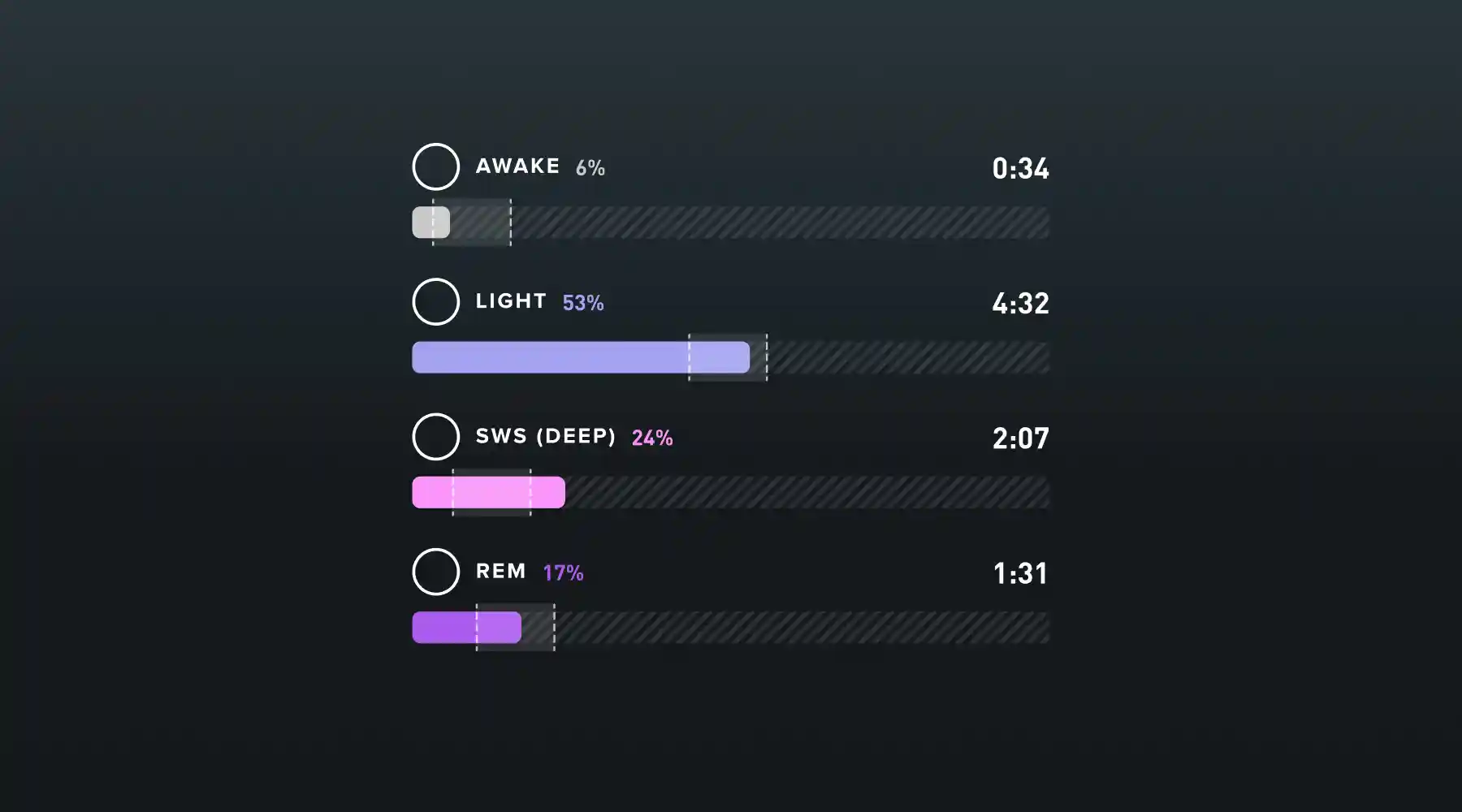

In a PSG sleep study, subjects undergo simultaneous electrocardiogram (EKG), electrooculogram (EOG), electroencephalogram (EEG), and electromyogram (EMG) recordings. Trained technicians then interpret these results, sorting each 30-second interval of data (called an epoch) into one of four sleep stages: Wake, Light, REM, and Slow Wave. While PSG is the gold standard and the most accurate known way to determine sleep stages, it is also expensive, cumbersome, and intrusive. WHOOP used PSG results together with the corresponding WHOOP data to train machine learning models to accurately detect the different sleep stages.
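To make the idea of epochs concrete, here is a minimal sketch of how a night scored in 30-second epochs could be summarized and compared against a PSG reference. The simple percent-agreement measure, the function names, and the sample labels are assumptions for illustration; the article does not state which agreement statistics the validation studies used, and this is not the WHOOP staging model.

```python
from collections import Counter

EPOCH_SECONDS = 30
STAGES = ("Wake", "Light", "REM", "Slow Wave")


def stage_minutes(epochs):
    """Total minutes spent in each stage, given one label per 30-second epoch."""
    counts = Counter(epochs)
    return {stage: counts[stage] * EPOCH_SECONDS / 60 for stage in STAGES}


def epoch_agreement(device, reference):
    """Percentage of epochs where the device label equals the reference label."""
    if not reference or len(device) != len(reference):
        raise ValueError("label sequences must be non-empty and equal in length")
    matches = sum(d == r for d, r in zip(device, reference))
    return 100.0 * matches / len(reference)


if __name__ == "__main__":
    # Hypothetical four-minute excerpt (eight epochs), not real study data.
    psg = ["Wake", "Light", "Light", "Slow Wave", "Slow Wave", "REM", "REM", "Light"]
    device = ["Wake", "Light", "Light", "Light", "Slow Wave", "REM", "REM", "Wake"]
    print(stage_minutes(psg))
    print(epoch_agreement(device, psg))  # 75.0
```

Here the device label matches the PSG label in six of eight epochs, so the agreement is 75%.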

If you've ever wondered what the tiny green lights at the bottom of your WHOOP are, they are a very important part of PPG. Between the two green lights is a small photoreceptor that measures light. When specific colors (wavelengths) of light shine onto the skin, blood volume can be measured from the light reflected back, since blood absorbs specific colors and reflects others. Once blood flow is measured, we can derive heart rate, heart rate variability, and respiratory rate, all of which are used in our sleep staging algorithms.
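The step from a pulse signal to heart rate and HRV can be sketched as follows, assuming the individual beats have already been detected and expressed as inter-beat intervals in milliseconds. RMSSD is used here because it is a widely used HRV statistic; the article does not say which HRV measure or beat-detection method WHOOP uses, so treat the function names and sample values as hypothetical.

```python
import math


def mean_heart_rate_bpm(ibis_ms):
    """Average heart rate in beats per minute from inter-beat intervals (ms)."""
    if not ibis_ms:
        raise ValueError("need at least one inter-beat interval")
    return 60000.0 / (sum(ibis_ms) / len(ibis_ms))


def rmssd_ms(ibis_ms):
    """Root mean square of successive differences between intervals (ms)."""
    if len(ibis_ms) < 2:
        raise ValueError("need at least two inter-beat intervals")
    diffs = [b - a for a, b in zip(ibis_ms, ibis_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


if __name__ == "__main__":
    # Hypothetical intervals between five consecutive beats.
    ibis = [1000.0, 950.0, 1020.0, 980.0]
    print(round(mean_heart_rate_bpm(ibis), 1))  # 60.8
    print(round(rmssd_ms(ibis), 1))             # 54.8
```

Heart rate reflects the average interval between beats, while HRV reflects how much consecutive intervals differ from one another, which is why both can be derived from the same beat timing.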

Ensuring data quality during exercise and daily life

While WHOOP is built for precision, a few external factors can influence data quality. To ensure you get the most accurate readings, consider the following:

- Device Fit: For optimal sensor contact, wear your WHOOP snugly, about one inch above your wrist bone.

- Activity Type: During activities with significant wrist movement (e.g., weightlifting or boxing), data quality can be affected.

- Sensor Placement: For activities with significant wrist movement, use WHOOP Body with Any-Wear Technology to move the sensor to a more stable location, such as your torso or bicep.

For more detailed guidance, visit our support article on What Affects WHOOP Accuracy.

From accurate data to better performance

Accurate data is the first step. The real power of WHOOP is turning that data into actionable insights that guide your daily behaviors. By trusting the numbers, you can train smarter, recover faster, and sleep better. This commitment to precision is what allows WHOOP to be your personalized coach, helping you unlock your potential and sustain it over the long term. Ready to see what precise, personalized insights can do for you? Join WHOOP.

Frequently asked questions about WHOOP accuracy

Is WHOOP medically accurate?

WHOOP is a consumer wellness product designed to help you optimize your performance and is not a medical device intended to diagnose, treat, or prevent any disease. Always consult a physician for medical advice.

Can my WHOOP data be wrong?

While WHOOP is validated for accuracy, external factors like an improper fit or extreme wrist motion during certain exercises can affect data quality. For best results, ensure your device is snug and consider using WHOOP Body apparel for activities that involve a lot of wrist movement. Learn more in our guide on What Affects WHOOP Accuracy.

How does WHOOP accuracy compare to other wearables?

Multiple third-party studies have shown WHOOP to be a top-performing and highly accurate consumer wearable for measuring heart rate, heart rate variability, and sleep. Our focus on 24/7 data collection and advanced sensor technology is designed to provide best-in-class precision across the metrics that matter most for your health and performance.